Concepts to Master

1. Race Conditions

In some operating systems, processes that are working together may share some common storage that each one can read and write. The shared storage may be in main memory (possibly in a kernel data structure) or it may be a shared file; the location of the shared memory does not change the nature of the communication or the problems that arise. To see how interprocess communication works in practice, let us consider a simple but common example, a print spooler. When a process wants to print a file, it enters the file name in a special spooler directory. Another process, the printer daemon, periodically checks to see if there are any files to be printed, and if so removes their names from the directory.

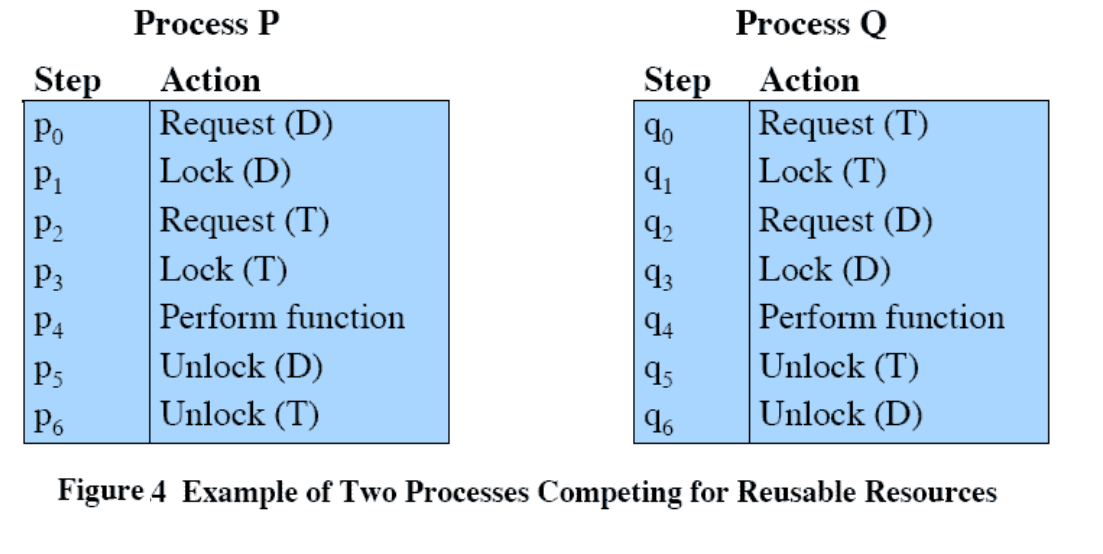

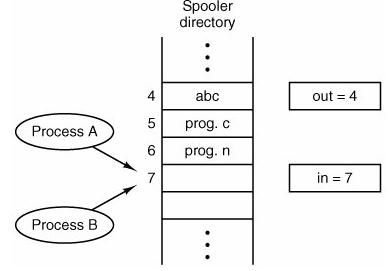

Imagine that our spooler directory has a large number of slots, numbered 0, 1, 2, ..., each one capable of holding a file name. Also imagine that there are two shared variables, out, which points to the next file to be printed, and in, which points to the next free slot in the directory. These two variables might well be kept in a two-word file available to all processes. At a certain instant, slots 0 to 3 are empty (the files have already been printed) and slots 4 to 6 are full (with the names of files to be printed). More or less simultaneously, processes A and B decide they want to queue a file for printing. This situation is shown in Fig. 2-8.

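In jurisdictions where Murphy's law is applicable, the following might well happen. Process A reads in and stores the value, 7, in a local variable called next_free_slot. Just then a clock interrupt occurs and the CPU decides that process A has run long enough, so it switches to process B. Process B also reads in, and also gets a 7, so it stores the name of its file in slot 7 and updates in to be an 8. Then it goes off and does other things.

When process A eventually runs again, it still holds 7 in next_free_slot, so it writes its file name into slot 7, overwriting the name process B put there, and sets in to 8. Process B's file is never printed. A situation like this, where the outcome depends on exactly which process runs when, is called a race condition.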
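The interleaving above can be replayed step by step. The following is a minimal sketch, not a real spooler: it uses no threads, the file names are hypothetical, and it assumes that process A, once resumed, carries on with the value of in it read before the interrupt.

```python
# Deterministic replay of the spooler interleaving (no real concurrency).
# All file names are hypothetical placeholders.
slots = {4: "report.txt", 5: "notes.txt", 6: "draft.txt"}  # slots 4 to 6 are full
shared = {"out": 4, "in": 7}                               # the two shared variables

# Process A reads `in`, then is interrupted before it can use the value.
next_free_slot_a = shared["in"]

# Process B runs to completion: read `in`, store its file name, update `in`.
next_free_slot_b = shared["in"]
slots[next_free_slot_b] = "file_B"
shared["in"] = next_free_slot_b + 1

# Process A resumes with its stale copy of `in`.
slots[next_free_slot_a] = "file_A"       # overwrites the entry B just wrote
shared["in"] = next_free_slot_a + 1      # `in` is set to 8 a second time

print(slots[7], shared["in"])            # file_A 8
```

The directory still looks consistent (slot 7 is full and in is 8), which is why the printer daemon would notice nothing wrong even though the name queued by process B is gone.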

2. Critical Sections

How do we avoid race conditions? The key to preventing trouble here and in many other situations involving shared memory, shared files, and shared everything else is to find some way to prohibit more than one process from reading and writing the shared data at the same time. Put in other words, what we need is mutual exclusion, that is, some way of making sure that if one process is using a shared variable or file, the other processes will be excluded from doing the same thing. The difficulty above occurred because process B started using one of the shared variables before process A was finished with it. The choice of appropriate primitive operations for achieving mutual exclusion is a major design issue in any operating system, and a subject that we will now examine in great detail.

The problem of avoiding race conditions can also be formulated in an abstract way. Part of the time, a process is busy doing internal computations and other things that do not lead to race conditions. However, sometimes a process may be accessing shared memory or files. That part of the program where the shared memory is accessed is called the critical region or critical section. If we could arrange matters such that no two processes were ever in their critical regions at the same time, we could avoid race conditions.
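As an illustration, the spooler's read-store-update sequence can be treated as a critical region and guarded so that only one thread of control is inside it at a time. This is a sketch under stated assumptions: it uses Python threads and threading.Lock in place of separate processes and an operating-system primitive, and the names queue_file, lock, and the file names are hypothetical.

```python
import threading

slots = {}                    # spooler directory: slot number -> file name
shared = {"in": 7}            # next free slot, shared by all threads
lock = threading.Lock()       # hypothetical guard for `slots` and `shared`

def queue_file(name):
    # Everything inside the `with` block is the critical region:
    # at most one thread can hold the lock at any moment.
    with lock:
        next_free_slot = shared["in"]
        slots[next_free_slot] = name
        shared["in"] = next_free_slot + 1

threads = [threading.Thread(target=queue_file, args=(f"file_{i}",))
           for i in range(8)]
for t in threads:
    t.start()
for t in threads:
    t.join()

print(sorted(slots))          # every file got its own slot
```

Because the read of in and its update can no longer be separated by another thread's access, no two callers can obtain the same slot number.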

Although this requirement avoids race conditions, this is not sufficient for having parallel processes cooperate correctly and efficiently using shared data. We need four conditions to hold to have a good solution:

a. No two processes may be simultaneously inside their critical regions.

b. No assumptions may be made about speeds or the number of CPUs.

c. No process running outside its critical region may block other processes.

d. No process should have to wait forever to enter its critical region.

3. Entry Section

4. Exit Section

5. Remainder Section
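The entry, exit, and remainder sections are conventionally arranged around the critical section in a loop: a process asks permission to enter, works on the shared data, announces that it has left, and then does work that touches nothing shared. The sketch below shows that layout only; it assumes Python threads and a threading.Lock as the mutual-exclusion primitive, and worker, trace, and the round count are hypothetical.

```python
import threading

lock = threading.Lock()      # hypothetical mutual-exclusion primitive
trace = []                   # shared data, touched only in the critical section

def worker(name, rounds):
    for _ in range(rounds):
        lock.acquire()                     # entry section: wait for permission
        try:
            trace.append((name, "enter"))  # critical section: shared data
            trace.append((name, "leave"))
        finally:
            lock.release()                 # exit section: let others in
        sum(range(100))                    # remainder section: private work only

threads = [threading.Thread(target=worker, args=(n, 50)) for n in ("A", "B")]
for t in threads:
    t.start()
for t in threads:
    t.join()
```

If mutual exclusion holds, every "enter" record in trace is immediately followed by a "leave" record from the same worker, since no other worker can append in between.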

6.

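Mutual exclusion can be regarded as a kind of synchronization; it is a special case of the synchronization relationship.

Rules that synchronization should follow

Enter when idle (a free critical section admits a requesting process), wait when busy (a process must wait while another is inside), bounded waiting (no process waits forever), and yield while waiting (a process that cannot enter gives up the CPU rather than busy waiting).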